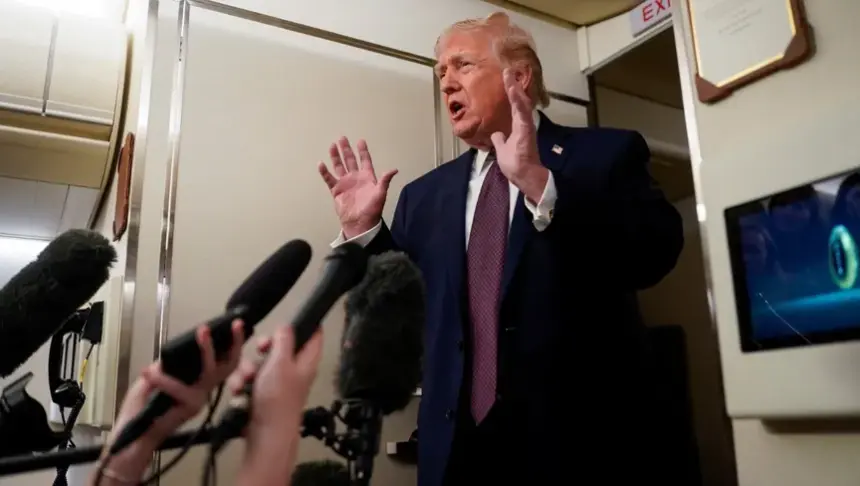

President Donald Trump has directed all US federal agencies to immediately cease the use of artificial intelligence products developed by Anthropic PBC, escalating a high-profile dispute between the company and the Pentagon over safeguards and usage restrictions.

In a statement posted on social media on Friday, Trump accused Anthropic of attempting to “strong-arm” the Defense Department by enforcing its own terms of service rather than deferring to US government authority. He announced a six-month phase-out period for agencies currently using the company’s technology, including the Department of Defense, and warned that the firm could face unspecified “major civil and criminal consequences” if it does not cooperate with the transition.

The decision threatens to disrupt up to $200m in government work previously awarded to Anthropic, spanning military and civilian contracts, including engagements with the State Department. The move also introduces uncertainty around the Pentagon’s access to advanced AI tools, particularly in classified environments where Anthropic’s systems have played a key role.

At the center of the dispute is Claude, Anthropic’s flagship AI chatbot. Defense Secretary Pete Hegseth had reportedly given the company a deadline to allow the Pentagon to deploy Claude for any lawful purpose, without restrictions imposed by Anthropic. However, the company has maintained that its models should not be used for mass domestic surveillance or fully autonomous weapons systems.

Founded with a stated mission to prioritize the responsible development of artificial intelligence, Anthropic has positioned itself as a leader in AI safety. Chief Executive Officer Dario Amodei reiterated this week that the company could not “in good conscience” agree to the Pentagon’s demands if they involved removing core usage safeguards.

The confrontation unfolds as the US military accelerates efforts to integrate advanced AI across operations. A recently published Pentagon strategy calls for transforming the armed forces into an “AI-first” institution, emphasizing experimentation with frontier models and a reduction in bureaucratic constraints. The strategy also encourages selecting AI systems “free from usage policy constraints” that could limit lawful military applications.

Anthropic’s removal could present operational challenges. Until recently, it was the only AI provider authorized to operate within certain classified Pentagon cloud environments. Its specialized government product, Claude Gov, has reportedly been widely used by defense personnel due to its functionality and ease of deployment.

The dispute also highlights intensifying competition among AI firms for federal contracts. Rivals including OpenAI, Google (maker of Gemini), and Elon Musk’s xAI are actively pursuing classified and defense-related work. xAI recently secured approval for classified operations, further shifting the competitive landscape.

Beyond the immediate contractual implications, the episode underscores broader tensions between Silicon Valley and Washington over the governance of powerful AI systems. Technology workers at several major firms have publicly opposed unrestricted military use of AI tools, reflecting growing ethical debates within the industry.

Anthropic is reportedly preparing for a potential initial public offering later this year, as it seeks to justify a valuation estimated at $380bn. The loss of federal business may complicate its revenue outlook, though the company continues to expand its commercial customer base.

For now, the six-month transition period sets the stage for further negotiations. Defense officials have indicated they remain open to dialogue, but the clash signals a pivotal moment in defining the balance between national security priorities and AI safety principles in the United States.